This post is about urunc, a tool that we built to treat unikernels as containers and properly introduce unikernels to the cloud-native world! Essentially, urunc is a container runtime able to spawn unikernels that reside in container images. Before digging into the gory details, let us walk through some required concepts: unikernels, containers, and container runtimes.

What are unikernels

Unikernels are a technology that was introduced in 2013 and has been quietly evolving for some years now. They can be seen as highly specialized, lightweight operating systems. Unlike traditional general-purpose operating systems, unikernels are tailored for the single purpose of running a specific application efficiently, eliminating unnecessary overhead and minimizing footprint. A unikernel contains all the essential components needed to run a particular application, including the application code and the necessary portions of the operating system code. Everything that is not required for running the app is stripped out. The result is a self-contained, portable and minimal unit of software that can run anywhere with virtually no overhead and a significantly decreased attack surface.

Unikernel use cases

Due to their inherent design simplicity, low footprint and near-instant spawn times, unikernels seem suitable for a number of interesting use cases:

Serverless functions: With ultra-fast boot times and efficient execution, unikernels are a great fit for the dynamic environment of serverless functions.

SaaS/microservices: In SaaS and microservices environments, unikernels provide a tailored solution by isolating individual applications, minimizing interference, and enhancing security in multi-tenant scenarios.

Edge deployments for resource-constrained devices: Due to their minimal footprint, unikernels can shine in edge computing by efficiently utilizing limited resources, ensuring optimal performance for deployment in edge devices.

Comparing unikernels and containers

How do unikernels compare to containers, the current standard of software delivery and execution? In our experience, there are some benefits and some drawbacks when it comes to adopting unikernels. We present the basic criteria and some comments on each technology below:

Lightweight: While containers are known for their lightweight nature, unikernels take this to the next level, offering an even more streamlined and specialized environment. By eliminating non-essential components, unikernels minimize their footprint to an extent beyond what traditional containers achieve.

Portable: Both containers and unikernels maintain a similar level of portability, allowing applications to run consistently across various environments.

Efficient Resource Consumption: Efficiency in resource consumption is a shared strength between containers and unikernels. Both excel in optimizing resource usage, but unikernels, with their minimalistic design, stand out in resource-constrained environments, ensuring optimal performance with minimal overhead.

Scalable: The scalability factor remains comparable between containers and unikernels. Both can be scaled horizontally to meet increased demand, providing a responsive and adaptable infrastructure for dynamic workloads.

Isolated: Containers provide a certain level of isolation using mechanisms like cgroups and namespaces. However, unikernels benefit from hardware isolation, offering the same security as traditional VMs.

Easy to Use: Containers boast a wide and well-defined ecosystem of tools designed to package, distribute, execute and orchestrate applications. Unikernels, while powerful, are not as intuitive or as easy to use for those accustomed to the simplicity of container technologies. They require a more specialized understanding of application architecture and deployment. Furthermore, some of the tools required to elevate unikernels to the status of a first-class citizen in the cloud-native landscape are still missing.

What is missing?

The technology behind unikernels is a good candidate for cloud deployments, especially in the case of microservices and FaaS. However, an important component is missing: the tool that will spawn unikernels and manage their lifecycle. At the same time, industry standards, such as those of the Open Container Initiative (OCI), create specific requirements for such a tool. urunc aspires to fill this gap, making unikernels as simple to use in cloud-native environments as containers.

Unikernels are not a drop-in replacement for containers. First off, there’s no simple and user-friendly way to build unikernels for specific applications (more on this coming soon). Then, there is no way to package and distribute unikernels like OCI images. Additionally, there’s no tooling to run unikernels in a way that is compatible with OCI standards.

The closest tools we have for deploying unikernels are the ones made for VMs: typical sandboxed container runtimes such as kata-containers, or VM management tools like libvirt. However, since unikernels are not typical VMs (there’s no operating system, no agent to communicate with the container runtime to set up a container in the sandbox, etc.), these tools fall short of making unikernels a real substitute for sandboxed containers.

To this end, after experimenting with the available tools (essentially kata-containers) to build a simple unikernel container runtime on top of existing functionality, we felt that the time had come to take a step back, follow a much simpler design, and build the unikernel container runtime: urunc.

Enter urunc

Generic container runtimes (such as runc) manage the systems stack where applications are spawned.

A unique feature of unikernels is that they are self-contained: an application runs along with its library and OS dependencies as a single address-space VM image, combining the systems stack and the application. Leveraging this feature, urunc maps each application/unikernel to a single container, thus enabling direct management of applications from the container runtime itself.

There is no notion of a VM sandbox, while at the same time, virtualization extensions ensure proper isolation between user code (the unikernel) and the rest of the system. This is also inherent in the design of unikernels, which are, essentially, VMs.

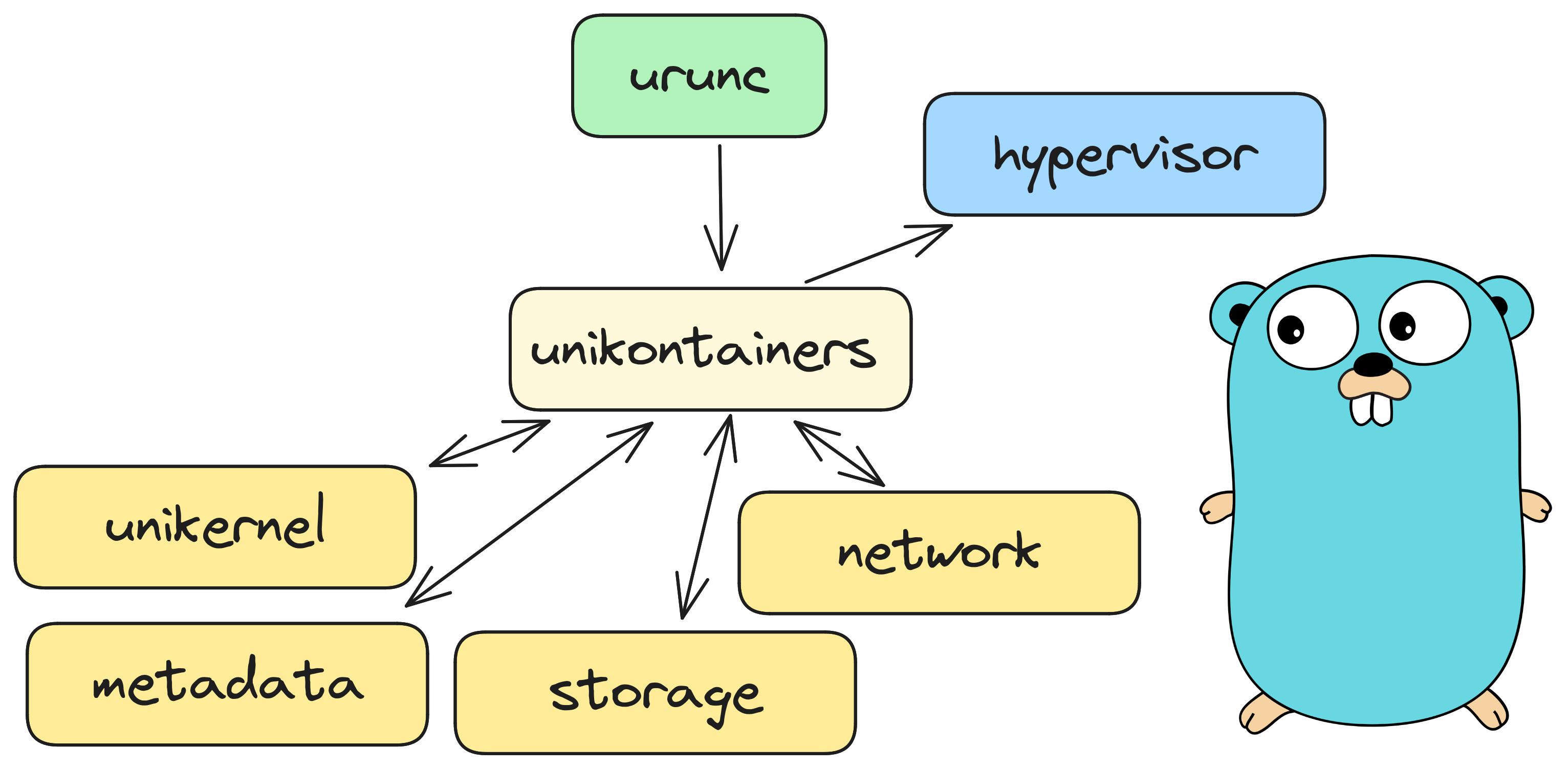

Figure 1: Abstract design of urunc

The design of urunc separates each individual component into Go packages that can be exported (and exposed) to other components if needed. Moreover, urunc follows the standard container runtime design, mainly for maintainability, but also for easier debugging and extensibility.

OCI artifacts

Unikernel images for urunc adhere to the Open Container Initiative (OCI) standards, making them easily shareable and deployable across compatible platforms. To facilitate the building and packaging of container images for urunc, we built bima, a simple tool that adds unikernel binaries to container image layers and packages the artifacts as OCI-compatible images with metadata. An example of a Containerfile we use to package an nginx unikernel, built with the rumprun toolstack over solo5, along with its accompanying files, is shown below:

```dockerfile
# the FROM instruction will not be parsed
FROM scratch

COPY nginx.hvt /unikernel/nginx.hvt
COPY data /

LABEL com.urunc.unikernel.binary=/unikernel/nginx.hvt
LABEL "com.urunc.unikernel.cmdline"=''
LABEL "com.urunc.unikernel.unikernelType"="rumprun"
LABEL "com.urunc.unikernel.hypervisor"="hvt"
```

Container (Unikernel) spawning

Leveraging the power of underlying hypervisors, urunc spawns unikernel virtual machines (VMs), facilitating the deployment process. Furthermore, it is designed to be extensible, making it easy to support multiple hypervisors and unikernel types.

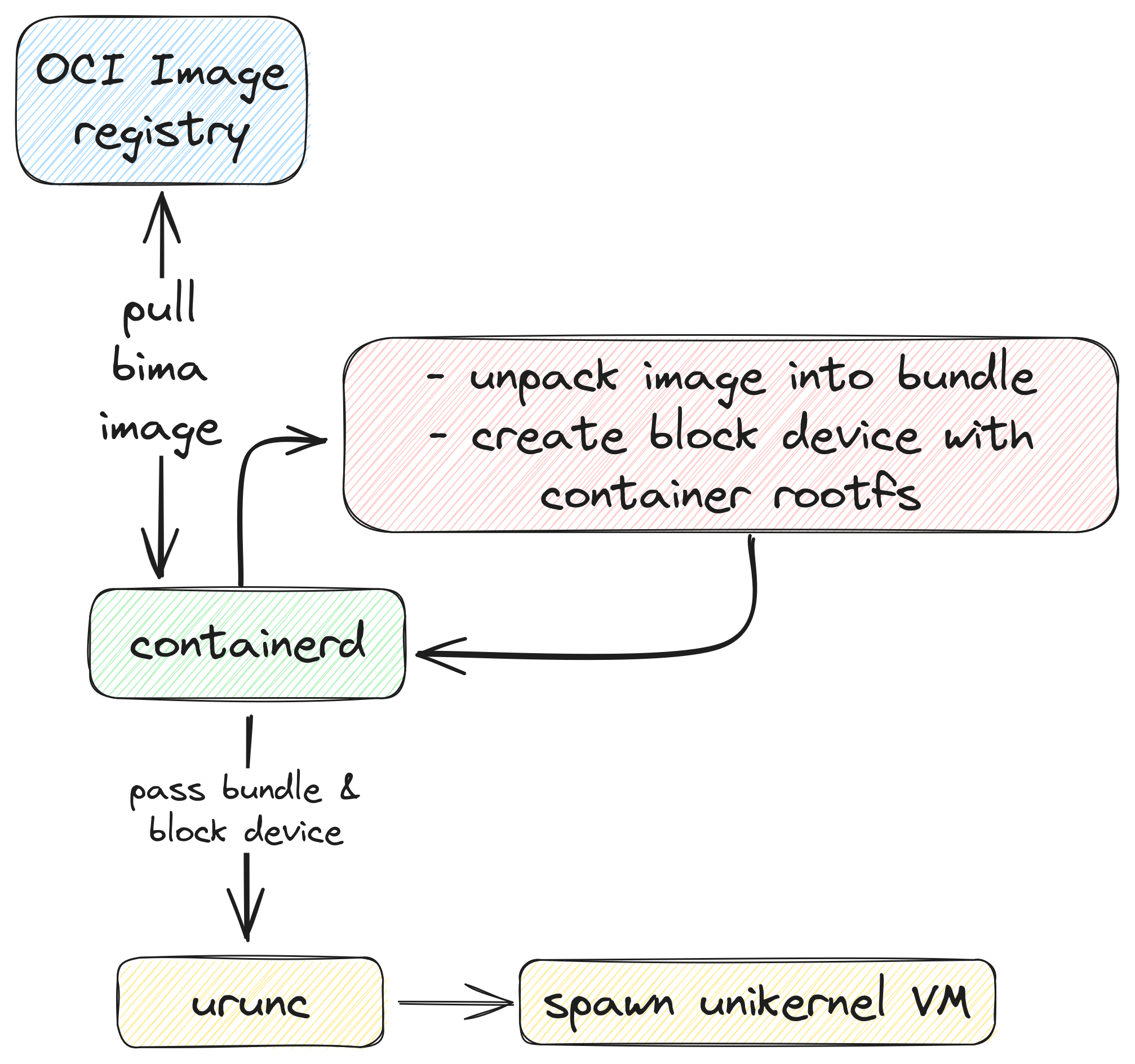

Figure 2: urunc execution flow

The high-level execution flow is as follows:

1. containerd pulls the image from a container registry, unpacks it and prepares the storage backend with the container rootfs (e.g. a block device for the devmapper snapshotter).
2. containerd invokes urunc with the bundle and storage backend.
3. urunc parses the annotations in the bundle and extracts the unikernel binary.
4. urunc constructs the appropriate command-line parameters for the respective hypervisor and spawns the unikernel.
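The annotation-parsing and command-construction steps of this flow can be sketched roughly as follows. This is a minimal Python illustration, not urunc's actual Go code. It assumes that the labels from the Containerfile example surface as entries in the `annotations` map of the bundle's `config.json`. The `UnikernelConfig` type, the function names, the device names `net0` and `rootfs`, and the `solo5-hvt` flag spellings are all assumptions made for illustration and may not match what urunc or a given Solo5 version expects.

```python
import json
import shlex
from dataclasses import dataclass
from typing import List, Optional

# Label/annotation prefix taken from the Containerfile example.
PREFIX = "com.urunc.unikernel."


@dataclass
class UnikernelConfig:  # hypothetical helper type, not part of urunc
    binary: str
    cmdline: str
    unikernel_type: str
    hypervisor: str


def parse_annotations(config_json: str) -> UnikernelConfig:
    """Read the unikernel settings from the text of a bundle's config.json."""
    annotations = json.loads(config_json).get("annotations", {})
    try:
        return UnikernelConfig(
            binary=annotations[PREFIX + "binary"],
            cmdline=annotations.get(PREFIX + "cmdline", ""),
            unikernel_type=annotations[PREFIX + "unikernelType"],
            hypervisor=annotations[PREFIX + "hypervisor"],
        )
    except KeyError as missing:
        raise ValueError(f"missing unikernel annotation {missing}") from None


def build_command(cfg: UnikernelConfig, tap: str,
                  block: Optional[str] = None) -> List[str]:
    """Assemble an argv list for the hypervisor named in the annotations."""
    if cfg.hypervisor != "hvt":
        raise NotImplementedError(f"no command builder for {cfg.hypervisor!r}")
    # ASSUMPTION: flag spellings and device names are illustrative only.
    argv = ["solo5-hvt", f"--net:net0={tap}"]
    if block is not None:
        argv.append(f"--block:rootfs={block}")
    argv.append(cfg.binary)
    # An empty cmdline label contributes no extra arguments.
    argv.extend(shlex.split(cfg.cmdline))
    return argv
```

Keeping parsing and command construction separate mirrors the extensibility goal mentioned earlier: supporting another hypervisor would mean adding another builder, while the annotation handling stays the same.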

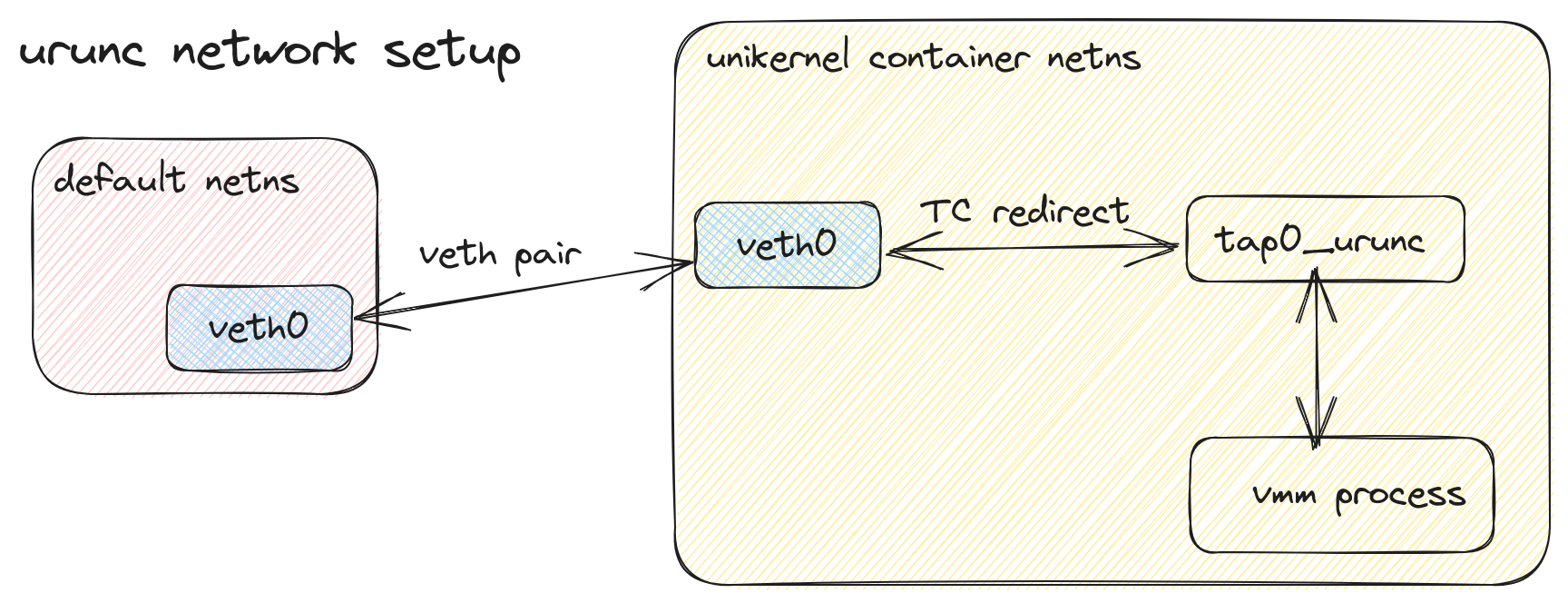

Regarding network handling, urunc creates a new tap device inside the container netns (tap0_urunc). urunc maps all incoming traffic from the CNI veth endpoint to the tap interface and all outgoing traffic to the veth endpoint. This process is shown in Figure 3.

Figure 3: urunc network flow
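One common way to implement this kind of two-way mapping on Linux is a pair of `tc` mirred redirect filters between the veth endpoint and the tap device. The post does not say which mechanism urunc uses, so the sketch below is an assumption, as is the veth name `eth0`. The helper only builds the `ip` and `tc` command lines; it does not run them, since that would require root privileges inside the container network namespace.

```python
from typing import List


def tap_redirect_commands(veth: str = "eth0",
                          tap: str = "tap0_urunc") -> List[List[str]]:
    """Build commands that create a tap device and mirror traffic both ways."""
    cmds = [
        ["ip", "tuntap", "add", "dev", tap, "mode", "tap"],
        ["ip", "link", "set", "dev", tap, "up"],
    ]
    # Redirect everything arriving on one device out of the other, in both
    # directions: veth -> tap (incoming) and tap -> veth (outgoing).
    for src, dst in ((veth, tap), (tap, veth)):
        cmds.append(["tc", "qdisc", "add", "dev", src, "ingress"])
        cmds.append([
            "tc", "filter", "add", "dev", src, "parent", "ffff:",
            "protocol", "all", "u32", "match", "u32", "0", "0",
            "action", "mirred", "egress", "redirect", "dev", dst,
        ])
    return cmds


if __name__ == "__main__":
    for cmd in tap_redirect_commands():
        print(" ".join(cmd))
```

With this approach the unikernel sees the pod's traffic directly on its tap interface, so no bridge is needed inside the network namespace.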

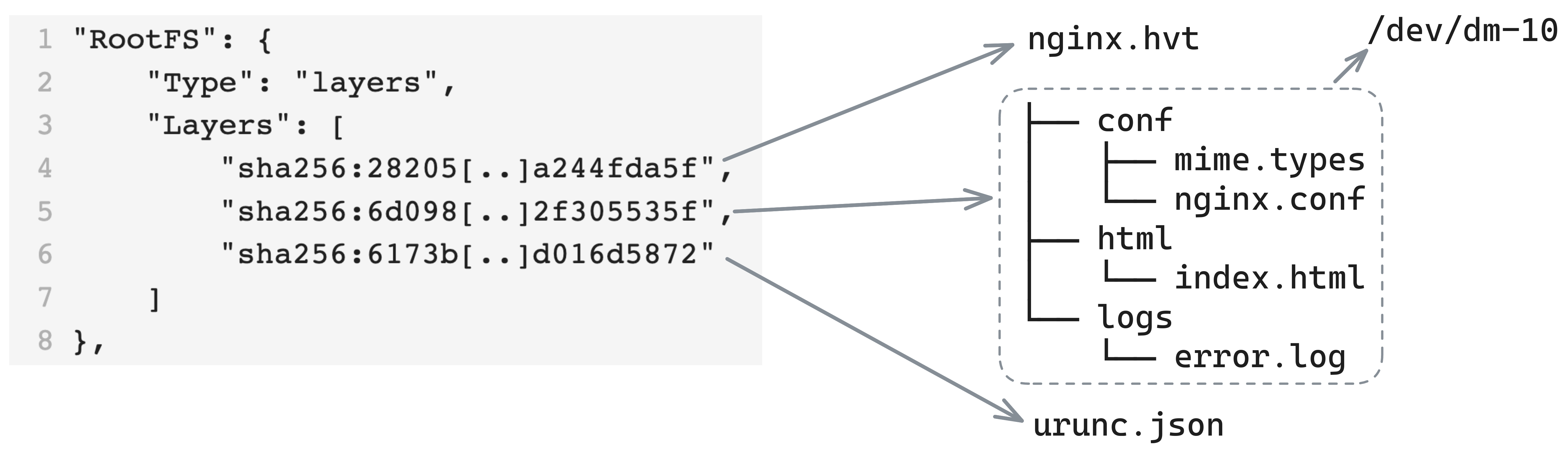

Regarding storage, urunc extracts the unikernel binary from the container image. Then, it extracts any additional files present in the container image rootfs, to be used as additional storage for the unikernel. urunc prepares and attaches the storage backend to the unikernel via the relevant command line directives of each hypervisor and unikernel type. This process is shown in Figure 4.

Figure 4: urunc storage handling
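The first part of this process, separating the unikernel binary from the rest of the rootfs, can be illustrated with a short sketch. It assumes the rootfs is already unpacked into a directory on the host and that the binary path comes from the `com.urunc.unikernel.binary` label. The function name `split_rootfs` is hypothetical, and how the remaining files are actually handed to the unikernel depends on the hypervisor and unikernel type.

```python
import os
from typing import List, Tuple


def split_rootfs(rootfs: str, binary: str) -> Tuple[str, List[str]]:
    """Return the binary's host path and the other files, relative to rootfs."""
    # The label holds an absolute path inside the image, so strip the leading
    # slash before joining it with the unpacked rootfs directory.
    binary_path = os.path.normpath(os.path.join(rootfs, binary.lstrip("/")))
    if not os.path.isfile(binary_path):
        raise FileNotFoundError(binary_path)
    extra = []
    for dirpath, _dirs, files in os.walk(rootfs):
        for name in files:
            path = os.path.normpath(os.path.join(dirpath, name))
            if path != binary_path:
                extra.append(os.path.relpath(path, rootfs))
    return binary_path, sorted(extra)
```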

k8s integration

One of our initial goals was to bring unikernels to the cloud-native world. With urunc we are finally able to spawn unikernels in k8s, without the burden of libvirt and its complicated integration with container runtimes and tools. urunc’s integration with k8s is as smooth as any other Container Runtime Interface (CRI)-compatible container runtime.

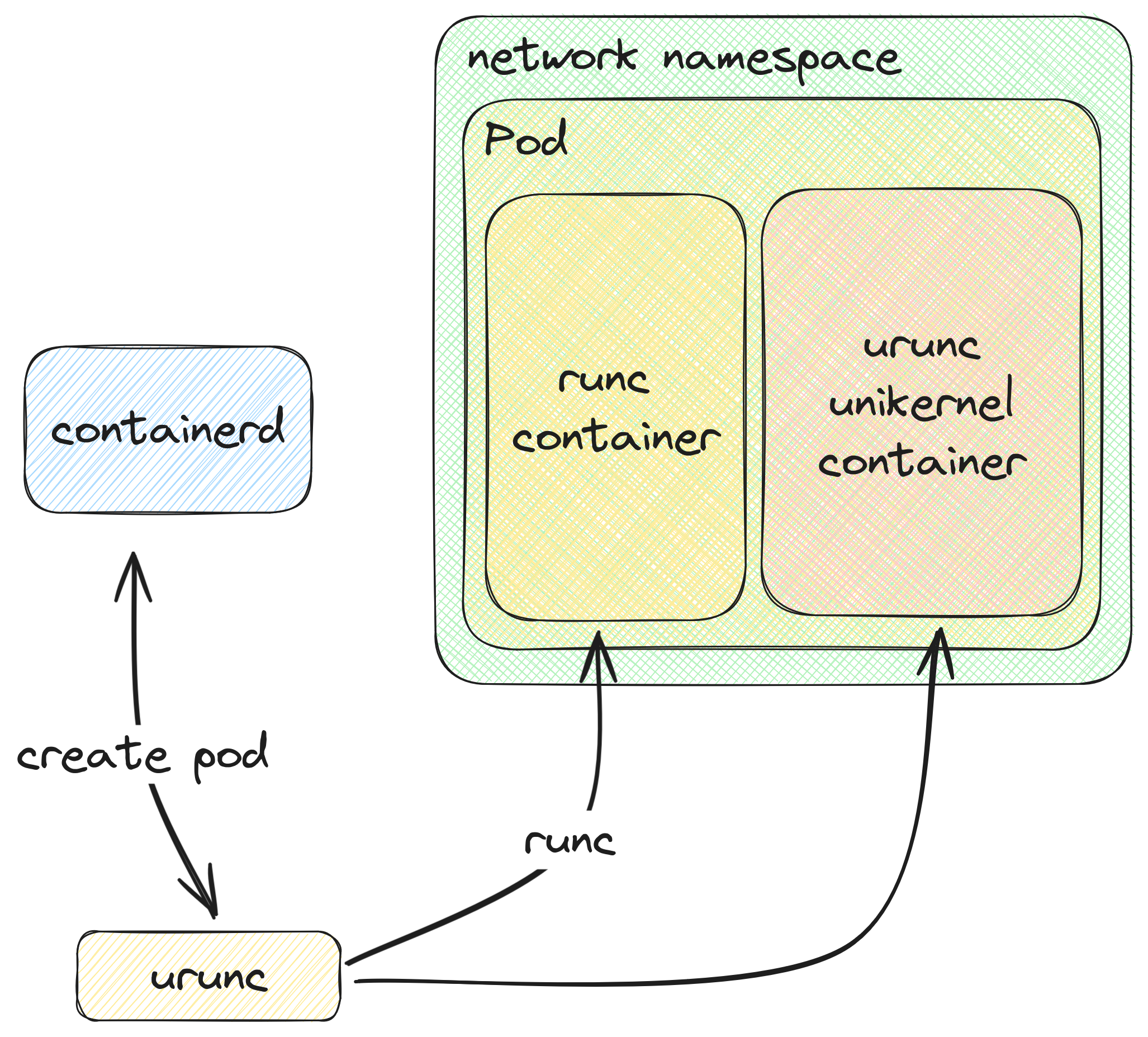

We achieved this through a minor workaround: Kubernetes primarily focuses on pods as its core abstraction, housing containers within them. To ensure resource sharing among containers within the same pod, Kubernetes initializes a container that remains idle (sleeping) to sustain active namespaces for the other containers within the pod to connect to.

So we had two options: (a) either include the handling of a generic container in urunc or (b) delegate this handling to runc.

Figure 5: urunc in k8s

We chose option (b). Figure 5 visualizes the process to spawn a unikernel container in k8s using urunc.
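Option (b) boils down to a small dispatch decision when a container is created. The sketch below assumes that unikernel containers can be recognized by the presence of the `com.urunc.unikernel.*` annotations from the Containerfile example; the function name and its return values are hypothetical, and urunc's real check may differ.

```python
from typing import Mapping

# Annotations that mark a container as a unikernel (from the Containerfile
# example). Treating exactly these three as required is an assumption.
REQUIRED = (
    "com.urunc.unikernel.binary",
    "com.urunc.unikernel.unikernelType",
    "com.urunc.unikernel.hypervisor",
)


def choose_handler(annotations: Mapping[str, str]) -> str:
    """Return which runtime should handle a container with these annotations."""
    if all(key in annotations for key in REQUIRED):
        return "urunc"
    # Generic containers, such as the idle one that holds the pod namespaces,
    # carry no unikernel annotations and are delegated to runc.
    return "runc"
```

For example, a container that carries only CRI bookkeeping annotations would be passed to runc, while one built from the nginx Containerfile would be spawned as a unikernel.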

urunc in action

Below you can see how we can deploy a redis key-value store server as a rumprun unikernel on solo5 using urunc and nerdctl.

Figure 6: A redis rumprun unikernel over solo5 spawned with nerdctl and urunc

urunc is open-source software, licensed under Apache-2.0. Get the code and start hacking!

Stay tuned for a hands-on post on how to run your own unikernel using urunc!